Microservices is a software development approach that structures an application as a collection of loosely coupled and independently deployable services. Instead of building monolithic applications, where all functions are bundled into a single program, microservices break down the application into modular services that can be developed, deployed, and scaled independently.

Microservices architecture gained popularity for its ability to create flexible, scalable, and maintainable applications. However, its implementation comes with several key decisions and challenges. In this blog, we’ll dive into some fundamental questions you should consider when creating a microservices architecture and explore the different communication strategies between microservices.

Breaking down the concept of microservices

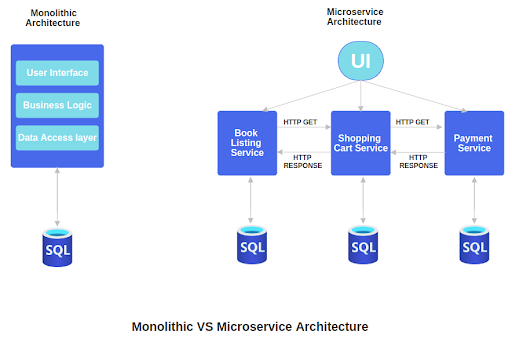

Imagine you want to build an online bookstore where people can buy or borrow books. There are two ways to build it:

- Monolithic Approach, where all components are tightly integrated in a single application.

- Microservice Approach, which decomposes the system into smaller, independent, deployable services for scalability and flexibility.

Monolithic Approach

In this approach, we create a single, big program that does everything: website design, listing books, authenticating users, shopping cart, payment and borrow processing. It would be like a massive shopping mall with all the shops, checkout counters, and parking in one giant building.

Microservices Approach

In this approach, we create the online store as a collection of smaller independent services. It would be like a city where each store, checkout station, and parking lot has its own separate area. Instead of a giant mall, the city has individual shops like a bakery, a bookstore, and a cafe. These standalone buildings work together to create a diverse and interconnected shopping experience.

Each service focuses on one specific thing and can work on its own. For example:

- Book listing service: This service handles showing the books available for sale. It knows all about books and their availability with their details and prices.

- Shopping Cart service: This service is responsible for keeping track of customers’ items in their shopping carts or borrowing bookings.

- Payment service: This service handles processing payments when customers decide to buy or borrow the books.

Now imagine when a customer visits an online bookstore:

- People start by looking for books (Book listing service)

- Users put some books in their cart (Shopping Cart service)

- When they are ready to buy, they make a payment (Payment service)

These three services communicate with each other to make sure everything works smoothly. Because they are independent, a problem in one does not have to take down the others: if the Payment service has an issue, it can be fixed while the Book listing and Shopping Cart services keep working for users.

So, microservices are like breaking a big task into smaller, specialized jobs, making it easier to build, change, and grow your online store. However, we need to plan how these three smaller services or parts communicate with each other and manage the complexity.

Building Microservices: Key Questions & Communication Strategies

1. How to break down the application?

This is the most crucial step in building a microservices architecture. Instead of one large program, we use several smaller, self-contained applications. It is essential to identify the distinct business functions within the application, because each microservice focuses on a specific business capability, e.g. user authentication, product catalog, payment processing, and so on.

2. How many services do we create?

The number of microservices depends on the complexity of the application. We need to aim for a balance between granularity and manageability. Creating too few microservices can lead to a monolithic-like architecture, while creating too many can increase operational overhead. Hence, it is essential to strike the right balance for the specific use case or business module.

3. How big or small should they be?

The size of a microservice matters: services should be small and focused on a single business capability. A microservice should do one thing and do it well. If a microservice grows too large, it becomes complex and challenging to maintain and scale.

4. How do they communicate? The right choice

Communication between microservices is a critical aspect. There are three primary communication strategies in a microservices architecture.


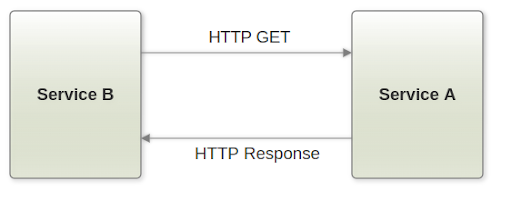

Communication via API Calls

Microservices communicate with each other through well-defined APIs, often using HTTP. This synchronous communication allows one microservice to request data or actions from another. It’s suitable for scenarios where real-time responses are required.

- Service B initiates an HTTP GET request to the Service A endpoint, and the request is sent over the network to Service A.

- Service A receives the HTTP request, processes it, and generates an HTTP response.

- The HTTP response is sent back over the network to Service B, which then extracts and processes the data from the response.

Use this for real-time, request-response interactions between microservices. It’s suitable for scenarios where low latency is crucial.
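The three steps above can be sketched with Python's standard library. This is a minimal illustration, not a production setup: the `/books/<id>` endpoint, the `BOOKS` data, and the function names `start_service_a` and `fetch_book` are all assumptions made up for this example, with Service A standing in for the book listing service.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical catalog data owned by Service A (the book listing service).
BOOKS = {"1": {"title": "Dune", "available": True}}

# Opener that ignores any system proxy settings, so the local call goes direct.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class ServiceAHandler(BaseHTTPRequestHandler):
    """Service A: answers GET /books/<id> with a JSON document."""

    def do_GET(self):
        book_id = self.path.rstrip("/").split("/")[-1]
        book = BOOKS.get(book_id)
        status = 200 if book else 404
        body = json.dumps(book or {"error": "not found"}).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging to keep the example quiet


def start_service_a():
    """Start Service A on a free local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), ServiceAHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_book(base_url, book_id, timeout=2.0):
    """Service B: send a blocking GET to Service A and parse the JSON reply."""
    url = f"{base_url}/books/{book_id}"
    with _opener.open(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    server = start_service_a()
    base_url = f"http://127.0.0.1:{server.server_address[1]}"
    print(fetch_book(base_url, "1"))
    server.shutdown()
    server.server_close()
```

Note that `fetch_book` blocks until Service A answers or the timeout expires. That is the trade-off of synchronous calls: the caller gets an immediate answer, but it is only as available as the service it calls.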


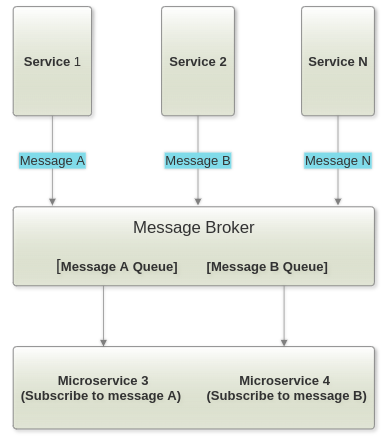

Communication via a message broker

In some cases, asynchronous communication is more appropriate. A message broker, such as RabbitMQ or Apache Kafka, can be used to facilitate event-driven communication. Microservices can publish events, and other microservices can subscribe to those events. This approach is beneficial for handling tasks that can be deferred and for building resilient systems.

- Each box represents a microservice labeled with its name (e.g., Service 1, Service 2, etc.).

- Arrows labeled with specific message names (e.g., Message A, Message B, Message N) represent messages being sent from one microservice to the message broker.

- The Message Broker is in the center and has separate queues for each message type (e.g., Message A Queue, Message B Queue).

- Arrows connect microservices to the message broker to show message publication and subscription.

- Microservice 3 and Microservice 4 are shown as consumers subscribed to specific message types.

Opt for this when you need asynchronous communication, event-driven architectures, or when you want to decouple microservices. It’s ideal for handling background tasks and building loosely coupled systems.
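To make the publish/subscribe flow concrete, here is a small in-memory sketch. It is not the RabbitMQ or Kafka API: `InMemoryBroker`, its methods, and the `order_paid` message type are hypothetical names for this example, and delivering every message to every subscriber is a simplification of what real brokers offer.

```python
from collections import defaultdict, deque


class InMemoryBroker:
    """Toy stand-in for a message broker: one FIFO queue per message type."""

    def __init__(self):
        self._queues = defaultdict(deque)      # message type -> pending messages
        self._subscribers = defaultdict(list)  # message type -> consumer callbacks

    def subscribe(self, message_type, callback):
        """Register a consumer for one message type."""
        self._subscribers[message_type].append(callback)

    def publish(self, message_type, payload):
        """Enqueue a message and return at once; nothing is processed yet."""
        self._queues[message_type].append(payload)

    def deliver_pending(self):
        """Drain every queue, handing each message to all its subscribers."""
        delivered = 0
        # Copy the items so a callback may publish new message types safely.
        for message_type, queue in list(self._queues.items()):
            while queue:
                payload = queue.popleft()
                for callback in self._subscribers[message_type]:
                    callback(payload)
                    delivered += 1
        return delivered


if __name__ == "__main__":
    broker = InMemoryBroker()
    emails, shipments = [], []

    # Two consumers react to the same event without knowing the publisher.
    broker.subscribe("order_paid", lambda order: emails.append(order["id"]))
    broker.subscribe("order_paid", lambda order: shipments.append(order["id"]))

    broker.publish("order_paid", {"id": 42})  # the publisher moves on immediately
    broker.deliver_pending()                  # consumers handle the event later
    print(emails, shipments)
```

The publisher never calls the consumers directly, which is the decoupling described above: a consumer can be added, removed, or temporarily offline without any change to the publishing service.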


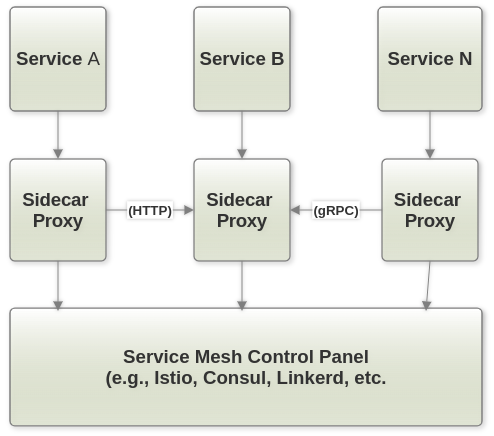

Communication via service mesh

Service mesh is an infrastructure layer that manages communication between microservices. It provides features like load balancing, traffic routing, and security. Tools like Istio and Linkerd are commonly used for service mesh implementation. This approach simplifies communication management and adds resilience to microservices.

- Each box represents a microservice (Service 1, Service 2, Service N).

- Each microservice has a “Sidecar Proxy” denoted by the “Sidecar Proxy” box, representing proxies like Envoy or Linkerd.

- Arrows indicate the type of communication protocol (HTTP, gRPC) between microservices and their respective sidecar proxies.

- The “Service Mesh Control Plane” is at the bottom, which manages and controls the traffic and communication between microservices.

Consider this for managing communication at the infrastructure level. Service mesh simplifies tasks like load balancing, authentication, and monitoring, making it valuable for complex microservices environments.
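The idea behind a sidecar can be sketched in a few lines: the service hands its outbound calls to a proxy, and the proxy applies policy such as retries and records metrics. Real meshes like Istio or Linkerd do this transparently at the network level. The `SidecarProxy` class and `make_flaky_service` helper below are hypothetical, in-process stand-ins meant only to show the division of responsibilities.

```python
import time


class SidecarProxy:
    """Toy sidecar: wraps outbound calls with retries and simple metrics."""

    def __init__(self, max_retries=2, backoff_seconds=0.0):
        # In a real mesh, a control plane would push these policy values.
        self.max_retries = max_retries
        self.backoff_seconds = backoff_seconds
        self.metrics = {"requests": 0, "retries": 0, "failures": 0}

    def call(self, upstream, *args, **kwargs):
        """Invoke `upstream`, retrying on ConnectionError up to max_retries times."""
        self.metrics["requests"] += 1
        for attempt in range(self.max_retries + 1):
            try:
                return upstream(*args, **kwargs)
            except ConnectionError:
                if attempt == self.max_retries:
                    self.metrics["failures"] += 1
                    raise
                self.metrics["retries"] += 1
                time.sleep(self.backoff_seconds)


def make_flaky_service(failures_before_success):
    """Hypothetical upstream that fails a fixed number of times, then succeeds."""
    remaining = {"count": failures_before_success}

    def payment_service(amount):
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise ConnectionError("payment service unavailable")
        return {"charged": amount}

    return payment_service


if __name__ == "__main__":
    proxy = SidecarProxy(max_retries=2)
    print(proxy.call(make_flaky_service(2), 10))
    print(proxy.metrics)
```

The service code contains no retry logic of its own. That logic lives in the proxy, so the policy can change without touching or redeploying the service.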

Scaling

Horizontal Scaling / scale-out:

Adding more instances of a service to handle increased load.

How it works:

- Deploy additional instances of a service on different servers or containers. This improves fault tolerance: if one instance fails, the others can continue to serve requests.

- Each instance operates independently and handles its own share of requests and workloads. This is cost-effective, because instances can run on commodity hardware or cloud instances and be added as needed.

- Load balancers are often used to distribute incoming requests evenly among the instances. This makes it easy to adapt to changes in traffic by adding or removing instances.
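As a rough sketch of how a load balancer spreads requests across instances, the example below uses round-robin selection and skips instances marked as down. `RoundRobinBalancer` and the instance names are assumptions for illustration; production load balancers add health checks, weighting, and connection handling on top of this.

```python
class RoundRobinBalancer:
    """Toy load balancer: rotates through instances, skipping unhealthy ones."""

    def __init__(self, instances):
        self._instances = list(instances)
        self._healthy = set(self._instances)
        self._next = 0  # position of the next instance to try

    def add_instance(self, name):
        """Scale out: register a new instance and start routing to it."""
        self._instances.append(name)
        self._healthy.add(name)

    def mark_down(self, name):
        """Stop routing to an instance that has failed."""
        self._healthy.discard(name)

    def pick(self):
        """Return the next healthy instance in rotation."""
        for _ in range(len(self._instances)):
            candidate = self._instances[self._next % len(self._instances)]
            self._next += 1
            if candidate in self._healthy:
                return candidate
        raise RuntimeError("no healthy instances available")


if __name__ == "__main__":
    balancer = RoundRobinBalancer(["cart-1", "cart-2", "cart-3"])
    print([balancer.pick() for _ in range(4)])

    balancer.mark_down("cart-2")      # one instance fails; traffic keeps flowing
    print([balancer.pick() for _ in range(3)])

    balancer.add_instance("cart-4")   # traffic grows; add capacity
    print([balancer.pick() for _ in range(4)])
```

Both benefits from the list show up here: a failed instance is skipped without interrupting service, and new capacity starts receiving traffic as soon as it is registered.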

Vertical Scaling / scale-up:

Increasing the resources (CPU, RAM) of individual service instances.

How it works:

- The existing server or container running the microservice is upgraded with more CPU cores, RAM, or other resources. Management is simpler because there are fewer instances to maintain than with horizontal scaling. However, a single instance can only be scaled up so far before it reaches hardware limits.

- The microservice can handle more requests and workloads without deploying multiple instances. Adding resources directly increases the capacity of a single instance, so the performance improvement is predictable. However, scaling up may require a temporary service interruption (downtime risk).

Choosing the Right Scaling Strategy

The choice between horizontal and vertical scaling depends on the specific microservices architecture and its requirements:

- Horizontal scaling is often favored for web applications with rapidly changing and unpredictable traffic patterns. It provides great elasticity and fault tolerance.

- Vertical scaling is suitable when you have well-defined, stable workloads, and you want to improve the performance of specific microservices without complicating your infrastructure.

Conclusion

In practice, many organizations combine horizontal and vertical scaling, choosing the strategy that suits each part of their application. This hybrid approach allows them to maintain flexibility and cost-efficiency while meeting performance demands.

Implementing microservices effectively depends on following a few key best practices: isolating services around focused business functions, designing clear and consistent APIs for inter-service communication, implementing comprehensive monitoring and logging to track service health and performance, and building robust error handling so that failures are managed gracefully. Together, these practices contribute significantly to the success and resilience of a microservices architecture.

